For Transformer models, length extrapolation is a desirable property we have been pursuing. It refers to the ability of a model trained on short sequences to be applied to long sequences without fine-tuning while maintaining good performance. The pursuit of length extrapolation is driven, on one hand, by theoretical completeness—the feeling that an ideal model should possess this property—and on the other hand, by training practicality, allowing us to train a model usable for long sequences at a lower cost (on shorter sequences).

Below, we analyze the key ideas for strengthening the length extrapolation of Transformers and derive a "super strong baseline" solution. We then use this "super strong baseline" to analyze several related research works.

Common Misconceptions

The first work to explicitly study the length extrapolation of Transformers was likely ALiBi, published in mid-2021, which is not that long ago. Why did it take so long (compared to the original Transformer publication in 2017) for someone to specialize in this topic? It is likely because, for a long time, we took it for granted that the length extrapolation of Transformers was a problem of position encoding, and that finding a better position encoding would solve it.

In fact, by comparing the extrapolation effects of existing position encodings, one can indeed find arguments to support this view. For example, several experimental results shared later show that the length extrapolation of relative position encodings is generally better than that of absolute position encodings; functional relative position encodings like RoPE tend to perform better than trainable relative position encodings. So, it seems that as long as we keep optimizing the form of position encoding, we will eventually provide Transformers with better length extrapolation and solve the problem. However, the situation is not so optimistic. While RoPE is considered a position encoding with better extrapolation capabilities, it can only extrapolate about 10% to 20% of the length before performance degrades. This ratio is far from expected; ideally, extrapolation should work for several times the original length to be considered valuable. Therefore, it is easy to imagine that relying solely on improving position encoding to enhance Transformer length extrapolation might take a very long time to achieve significant results.

Intuitively, many readers might feel that functional position encodings like Sinusoidal or RoPE, which have no trainable parameters, should have excellent length extrapolation. But in reality, this is not the case; these types of position encodings do not show any particular advantage in length extrapolation. Why is this? It is because when assuming the extrapolation of functional position encodings, people often forget its basic prerequisite—"smoothness."

In fact, extrapolation is the process of inferring the whole from the local, which should be familiar to us; Taylor series approximation is a classic example. It only needs to know the values of several derivatives of a function at a certain point to effectively estimate values within a neighborhood. It relies on the high-order smoothness of the given function (high-order derivatives exist and are bounded). But are Sinusoidal or RoPE such functions? No. They are combinations of sine and cosine functions with a phase function k/10000^{2i/d}. When 2i/d \approx 0, the function is approximately \sin k, \cos k, which are high-frequency oscillating functions with respect to the position k, rather than straight lines or functions that asymptotically approach a straight line. Therefore, the extrapolation behavior of models based on them is often difficult to predict. Can non-oscillating position encodings be designed? It is difficult; if a position encoding function does not oscillate, it often lacks sufficient capacity to encode enough position information. In a sense, the complexity of the position encoding function itself is a requirement for encoding positions.

Super Strong Baseline

In fact, a more accurate definition should be:

Length extrapolation is a problem of inconsistency between training and prediction lengths.

Specifically, there are two points of inconsistency:

During prediction, position encodings that were never seen during training are used (whether absolute or relative);

During prediction, the number of tokens processed by the attention mechanism far exceeds the number during training.

The first point is easy to understand: if it wasn’t trained, there’s no guarantee it can be handled well. This is a realistic phenomenon in Deep Learning, even for functional position encodings like Sinusoidal or RoPE. Regarding the second point, readers might be confused: theoretically, can’t Attention handle sequences of any length? What does the inconsistency between training and prediction length affect? The answer is entropy. We analyzed this in "Looking at Attention’s Scale Operation from Entropy Invariance". More tokens averaging the attention means the final distribution is relatively more "uniform" (higher entropy), i.e., attention is more dispersed. Conversely, a short training length means the attention entropy is lower and attention is more concentrated. This is also a difference between training and prediction that affects performance.

In fact, for a Transformer model with relative position encoding, a very simple Attention Mask can solve both of the above problems at once and achieve near-SOTA results:

It is not hard to understand that this turns the Attention during prediction into a local Attention, where each token can only see a number of tokens equal to the training length. In this way, the number of tokens each token can see is consistent with training, solving the second problem. At the same time, because it uses relative position encoding and the position count starts from the current token as the origin, such local Attention will not use more unknown encodings than during training, solving the first problem. Therefore, this simple Attention Mask solves the two difficulties of length extrapolation at once without retraining the model. What is even more amazing is that various experimental results show that if this is used as a baseline, the relative improvement of various similar works becomes meager. That is, it is already very close to SOTA, making it a fast and effective "super strong baseline."

Paper Review

Since ALiBi, many works have indeed been devoted to the study of Transformer length extrapolation. In this section, I have studied and organized some representative works. From these, we can see that they basically share many similarities with the baseline model, and can even be said to be variants of the baseline model to some extent. From this, we will further appreciate the profound connection between length extrapolation and the locality of attention.

ALiBi

As the "pioneering work," ALiBi cannot be ignored. It comes from the paper "Train Short, Test Long: Attention with Linear Biases Enables Input Length Extrapolation". In hindsight, the modification made by ALiBi is very simple; it just changes the Attention calculation before Softmax from \boldsymbol{q}_m^{\top}\boldsymbol{k}_n to: \begin{equation} \boldsymbol{q}_m^{\top}\boldsymbol{k}_n - \lambda|m - n|\label{eq:alibi} \end{equation} where \lambda > 0 is a hyperparameter, with different values set for each head. From this definition, one can see the similarity between ALiBi and the baseline model. Both subtract a non-negative matrix before Softmax, but the subtracted non-negative matrices are different. ALiBi can be seen as a "smooth version" of the baseline model:

ALiBi is a very simple (and of course effective) smooth local attention trick. However, if it is understood as "position encoding," it might not be quite appropriate. If Equation [eq:alibi] is generalized to bidirectional attention, then because |m - n|=|n - m|, the model would theoretically be unable to distinguish "left" from "right" and could only recognize the relative distance, which is clearly insufficient for accurately identifying position information. As for its good performance on unidirectional language models, it is because unidirectional language models can achieve non-trivial results (significantly higher than random) even without any position encoding. The local attention imposed by ALiBi serves to enhance locality, fitting the characteristics of the language modeling task itself.

KERPLE

KERPLE comes from the paper "KERPLE: Kernelized Relative Positional Embedding for Length Extrapolation". It is essentially a simple generalization of ALiBi, introducing two trainable parameters r_1, r_2 to generalize Equation [eq:alibi]: \begin{equation} \left\{\begin{aligned}&\boldsymbol{q}_m^{\top}\boldsymbol{k}_n - r_1|m - n|^{r_2} ,\qquad\qquad r_1 >0, 0 < r_2 \leq 2\\ &\boldsymbol{q}_m^{\top}\boldsymbol{k}_n - r_1\log(1+r_2|m - n|),\qquad\qquad r_1, r_2 > 0 \end{aligned}\right.\label{eq:kerple} \end{equation} With generalization and trainable parameters, it is not surprising that KERPLE achieves better results than ALiBi. However, I must severely criticize the KERPLE paper for its pretentiousness. According to the layout, the third section of the original paper is the theoretical support, but it is clearly an irrelevant section introduced just to forcibly increase the mathematical depth of the article. In substance, it does not help in understanding KERPLE at all and might even decrease the reader’s interest (to put it bluntly, it serves the reviewers, not the readers).

Sandwich

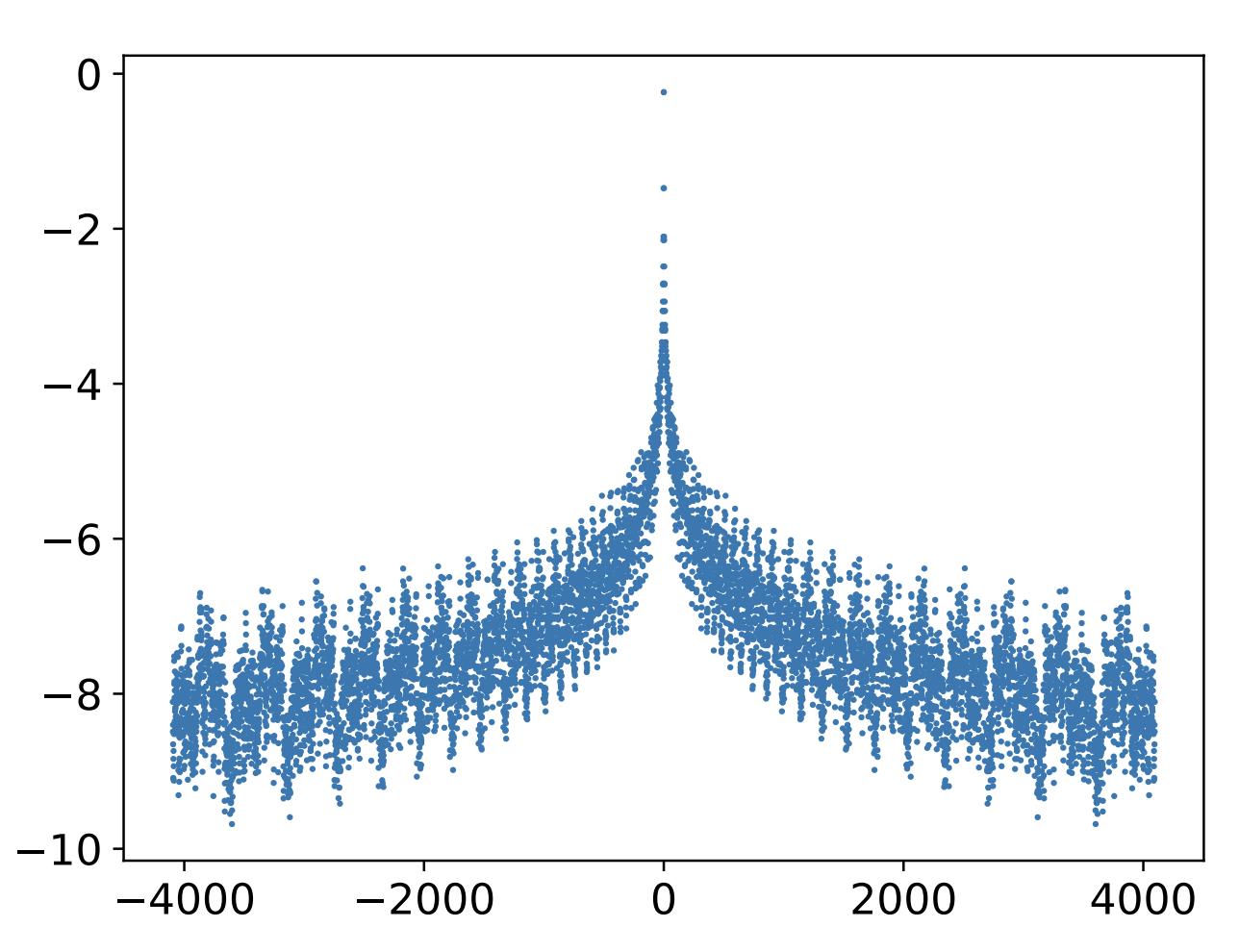

Sandwich was also developed by the authors of KERPLE, from "Receptive Field Alignment Enables Transformer Length Extrapolation", which was posted on Arxiv just last month. It replaces Equation [eq:alibi] with: \begin{equation} \boldsymbol{q}_m^{\top}\boldsymbol{k}_n + \lambda\boldsymbol{p}_m^{\top}\boldsymbol{p}_n\label{eq:sandwich} \end{equation} where \boldsymbol{p}_m, \boldsymbol{p}_n are Sinusoidal position encodings, and \lambda > 0 is a hyperparameter. From "Transformer Upgrade Road: 1. Tracing the Source of Sinusoidal Position Encoding", we know that \boldsymbol{p}_m^{\top}\boldsymbol{p}_n is a scalar function of m-n, and on average, it is a monotonically decreasing function of |m-n|. Therefore, its effect is similar to -\lambda|m-n|. The reason for emphasizing "on average" is that \boldsymbol{p}_m^{\top}\boldsymbol{p}_n as a whole is not strictly monotonic but oscillates downwards, as shown in the figure:

If necessary, we can also convert Sandwich into a form like RoPE where "absolute position encoding implements relative position encoding." This only requires noting that: \begin{equation} \boldsymbol{q}_m^{\top}\boldsymbol{k}_n + \lambda\boldsymbol{p}_m^{\top}\boldsymbol{p}_n = \left[\boldsymbol{q}_m, \sqrt{\lambda}\boldsymbol{p}_m\right]^{\top}\left[\boldsymbol{k}_n, \sqrt{\lambda}\boldsymbol{p}_n\right] \end{equation} In other words, Sandwich supplements absolute position information through concatenation, and the Attention result is equivalent to relative position encoding. However, it seems this conversion currently only has theoretical value because concatenation increases the vector dimension, which in turn increases the computational cost of Attention.

XPOS

XPOS comes from the paper "A Length-Extrapolatable Transformer", which appeared on Arxiv on the same day as Sandwich. It is a direct generalization of RoPE. We know that the basic solution for RoPE is: \begin{equation} \boldsymbol{q}_m\to \boldsymbol{\mathcal{R}}_m\boldsymbol{q}_m,\quad \boldsymbol{k}_n\to \boldsymbol{\mathcal{R}}_n\boldsymbol{k}_n \end{equation} where \boldsymbol{\mathcal{R}}_n=\begin{pmatrix}\cos n\theta & -\sin n\theta\\ \sin n\theta & \cos n\theta\end{pmatrix}. When I derived RoPE, I assumed that "Q and K undergo the same transformation." In fact, from the perspective of "absolute position implementing relative position," there is no need to restrict both transformations to be identical. For example, XPOS considers: \begin{equation} \boldsymbol{q}_m\to \boldsymbol{\mathcal{R}}_m\boldsymbol{q}_m \xi^m,\quad \boldsymbol{k}_n\to \boldsymbol{\mathcal{R}}_n\boldsymbol{k}_n \xi^{-n} \end{equation} where \xi is a scalar hyperparameter. Thus: \begin{equation} (\boldsymbol{\mathcal{R}}_m\boldsymbol{q}_m \xi^m)^{\top}(\boldsymbol{\mathcal{R}}_n\boldsymbol{k}_n \xi^{-n}) = \boldsymbol{q}_m^{\top}\boldsymbol{\mathcal{R}}_{n-m}\boldsymbol{k}_n \xi^{m-n} \end{equation} The total result still only depends on the relative position m-n. However, the problem now is that the exponent is m-n rather than |m-n|. As long as \xi \neq 1, one side will always diverge. The cleverness of XPOS is that it chooses the same scenario as many related works—focusing only on unidirectional language models—so we only use the attention part where m \geq n! In this case, simply choosing \xi \in (0,1) achieves the effect of decay with relative distance.

In fact, the additional exponential decay term \xi^m is not an original creation of XPOS. In the literature I have read, the same term first appeared in PermuteFormer, although PermuteFormer mainly focused on linear Attention scenarios. In terms of details, XPOS assigns different \xi to each block, but in private communication with the author, the author performed experiments sharing the same \xi and found that the improvement from setting different \xi was almost negligible. Additionally, we must appropriately control the value of \xi to prevent \xi^{-n} from overflowing when n is large.

It is worth noting that the decay with relative distance here is directly multiplied by the Attention Score before Softmax. The result is that Scores for larger relative distances become very close to 0, rather than tending toward negative infinity as in the previous designs. e^0 does not have the effect of tending toward zero. Therefore, such a design is not a variant of local Attention, and thus its performance does not reach SOTA. To make up for this gap, XPOS designed a special local Attention (called Blockwise Causal Attention, or BCA), which, when added, can fill this gap. During communication, the author stated that BCA was used because of implementation advantages; in reality, the local Attention of the baseline model works better. So, for extrapolation, one must look at local attention.

The experiments in the original paper are very rich and worth referencing; I recommend everyone read it carefully!

Summary

This article summarizes related work on enhancing the length extrapolation capability of Transformers, including a simple but powerful baseline solution and several papers focusing on length extrapolation. From these, we can find that these works are essentially variants of the baseline solution—local attention. Local attention is one of the key links to length extrapolation.

If you repost, please include the original address: https://kexue.fm/archives/9431

For more detailed reposting matters, please refer to: "Scientific Space FAQ"