As is well known, in both the text and visual domains, various generative models are “running amok” across the internet with an unstoppable momentum. Although everyone understands that achieving true Artificial General Intelligence (AGI) still has a long way to go, this does not prevent people from increasingly using generative models to create and share content. It is plain to see that many online articles are already accompanied by illustrations generated by Stable Diffusion models; it is plain to see that many news styles are increasingly showing the shadow of ChatGPT. This seemingly harmless trend is quietly raising a question: Should we remain vigilant about the flood of generative model data on the internet?

A recently published paper, “Self-Consuming Generative Models Go MAD,” reveals a worrying possibility: the unchecked expansion of generative models on the internet might lead to a digital version of a “Mad Cow Disease” epidemic. In this article, we will study this paper together and explore its potential impacts.

“Eating Oneself”

On one hand, the increasing frequency with which people use generative models will lead to more and more content on the internet being created by these models. On the other hand, generative models are also iteratively updated, and the data they use is crawled from the internet. One can imagine that in future training sets, the proportion of content created by generative models will become higher and higher. In other words, each subsequent generation of models may not have enough fresh data during iteration and will purely train on data produced by themselves—or as the Cantonese saying goes, “eating oneself.” This will lead to a decline in the quality or diversity of the models, which the original paper calls “Model Autophagy Disorder (MAD).”

Coincidentally, a similar example has appeared in biology. Cows are herbivores; however, to enhance their nutritional supply, some livestock farmers ground up the remains of other cattle (including brains) and mixed them into the feed. This seemed like a clever practice at the time, but it eventually led to the emergence and large-scale spread of “Mad Cow Disease” (BSE). This case illustrates that long-term “self-consumption” can lead to the accumulation of harmful factors within an organism, which, once reaching a certain level, can even trigger catastrophic diseases.

Therefore, we also need to reflect on whether the “rampage” of generative models will trigger another version of “Mad Cow Disease” on the internet—this could not only lead to the homogenization of information, making various contents become repetitive and lacking in originality and diversity, but also potentially trigger a series of unforeseen problems.

Reducing Diversity

Some readers might wonder: Isn’t a generative model just a simulation of the real data distribution? Even if data from generative models is used continuously for iterative training, shouldn’t it just be repeatedly presenting the real data distribution? How could it lead to a loss of diversity?

The reasons for this are multifaceted. First, the data used to train generative models is often not taken directly from the real distribution but is processed by humans, such as through denoising, normalization, and alignment. After processing, the training set has already lost some diversity. For example, the reason we observe many news reports or Zhihu answers having a “ChatGPT flavor” is not because of the content itself, but because their format is similar to ChatGPT’s, which indicates that ChatGPT’s training data and output styles are quite distinct and limited. Furthermore, to reduce the difficulty of training image generation models, we usually need to align the images. For instance, when training face generation models, it is often necessary to align everyone’s eyes to the same position; these operations also lead to a loss of diversity.

In addition, a crucial factor is that due to the limitations of the generative models themselves or training techniques, no generative model can be perfect. At this point, we usually actively introduce techniques that sacrifice diversity to improve generation quality. For example, for generative models like GANs or Flow, we choose to reduce the variance of the sampling noise to obtain higher-quality generation results—this is the so-called truncation trick or annealing trick. Additionally, as described in “Talk on Generative Diffusion Models (9): Conditional Control of Generation Results,” we usually introduce conditional information in diffusion models to control the output. Whether it is the Classifier-Guidance or Classifier-Free scheme, the introduction of extra conditions also limits the diversity of the generated results. In short, when generative models are not perfect, we actively give up some diversity in the process of balancing quality and diversity.

Normal Distribution

To gain a deeper understanding of this phenomenon, we will next explore some specific examples. To start, we first consider the normal distribution because it is simple enough that the solution and analysis are clearer. However, as we will see later, the results are already representative enough.

Assume the true distribution is a multivariate normal distribution \mathcal{N}(\boldsymbol{\mu}_0,\boldsymbol{\Sigma}_0), and the distribution we use for modeling is also a normal distribution \mathcal{N}(\boldsymbol{\mu},\boldsymbol{\Sigma}). Then the process of training the model is to estimate the mean vector \boldsymbol{\mu} and the covariance matrix \boldsymbol{\Sigma} from the training set. Next, we assume that each generation of the generative model is trained using only the data created by the previous generation. This is an extreme assumption, but it is undeniable that as generative models become more popular, this assumption becomes closer to reality.

Under these assumptions, we sample n samples \boldsymbol{x}_{t-1}^{(1)},\boldsymbol{x}_{t-1}^{(2)},\cdots,\boldsymbol{x}_{t-1}^{(n)} from the (t-1)-th generation model \mathcal{N}(\boldsymbol{\mu}_{t-1},\boldsymbol{\Sigma}_{t-1}) to train the t-th generation model: \begin{equation} \boldsymbol{\mu}_t = \frac{1}{n}\sum_{i=1}^n \boldsymbol{x}_{t-1}^{(i)},\quad \boldsymbol{\Sigma}_t=\frac{1}{n-1} \sum_{i=1}^n \big(\boldsymbol{x}_{t-1}^{(i)} - \boldsymbol{\mu}_t\big)\big(\boldsymbol{x}_{t-1}^{(i)} - \boldsymbol{\mu}_t\big)^{\top} \end{equation} Note that if the truncation trick is added, the t-th generation model becomes \mathcal{N}(\boldsymbol{\mu}_t,\lambda\boldsymbol{\Sigma}_t), where \lambda\in(0,1). Thus, one can imagine that the variance (diversity) of each generation will decay at a rate of \lambda, eventually becoming zero (complete loss of diversity). If we don’t use the truncation trick (i.e., \lambda=1), would everything be fine? Not necessarily. According to the definition \boldsymbol{\mu}_t = \frac{1}{n}\sum_{i=1}^n \boldsymbol{x}_{t-1}^{(i)}, since \boldsymbol{x}_{t-1}^{(i)} are all obtained through random sampling, \boldsymbol{\mu}_t is also a random variable. According to the additivity of the normal distribution, it actually follows: \begin{equation} \boldsymbol{\mu}_t \sim \mathcal{N}\left(\boldsymbol{\mu}_{t-1},\frac{1}{n}\boldsymbol{\Sigma}_{t-1}\right)\quad\Rightarrow\quad\boldsymbol{\mu}_t \sim \mathcal{N}\left(\boldsymbol{\mu}_0,\frac{t}{n}\boldsymbol{\Sigma}_0\right) \end{equation} It can be foreseen that when t is large enough, \boldsymbol{\mu}_t itself will significantly deviate from \boldsymbol{\mu}_0, which corresponds to a collapse in quality, not just a reduction in diversity.

In general, the introduction of the truncation trick will greatly accelerate the speed of diversity loss. Even without the truncation trick, in long-term iterative training with finite samples, the generated distribution may significantly deviate from the original true distribution. Note that the assumptions made in this normal distribution example are much weaker than those of general generative models—at least its fitting capability is guaranteed to be sufficient—yet it still inevitably leads to diversity decay or quality collapse. For real-world data and generative models with limited capabilities, it would theoretically only be worse.

Generative Models

For actual generative models, theoretical analysis is difficult to perform, so results can only be explored through experiments. The original paper conducted very rich experiments, and the results are basically consistent with the conclusions of the normal distribution: if the truncation trick is added, diversity will be lost rapidly; even without the truncation trick, the model after repeated iterations will inevitably show some deviations.

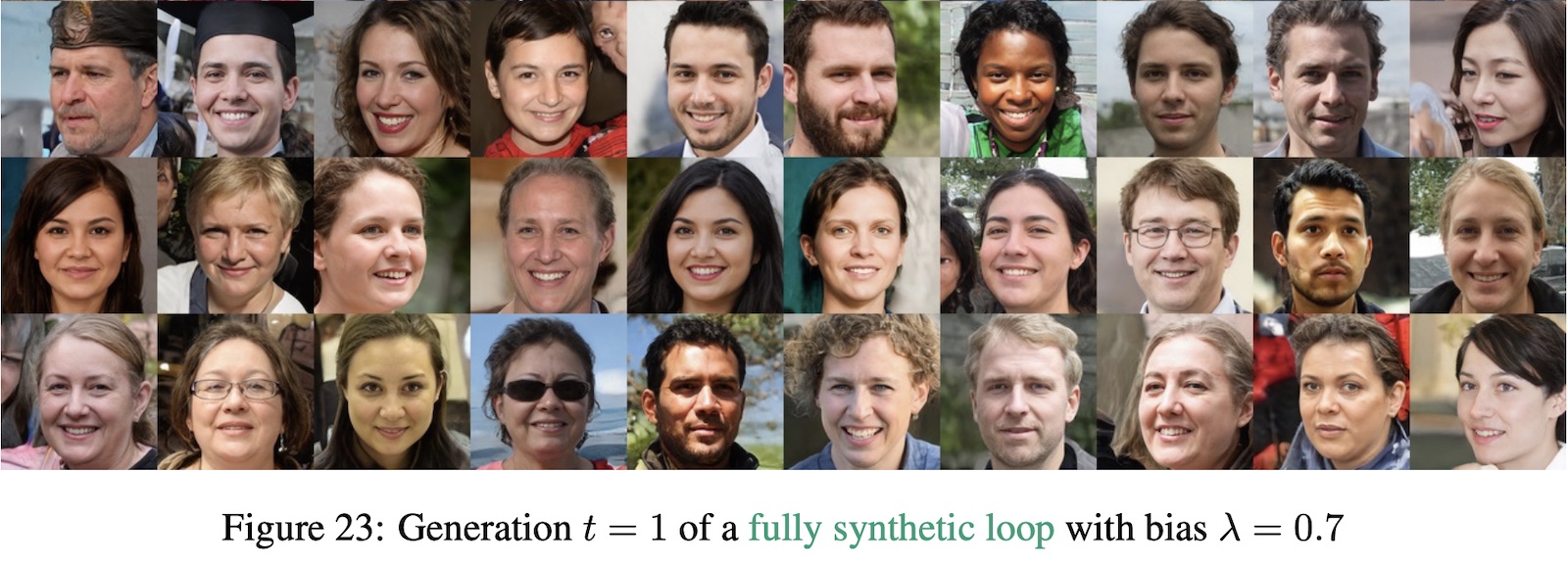

Here is an example with the truncation trick:

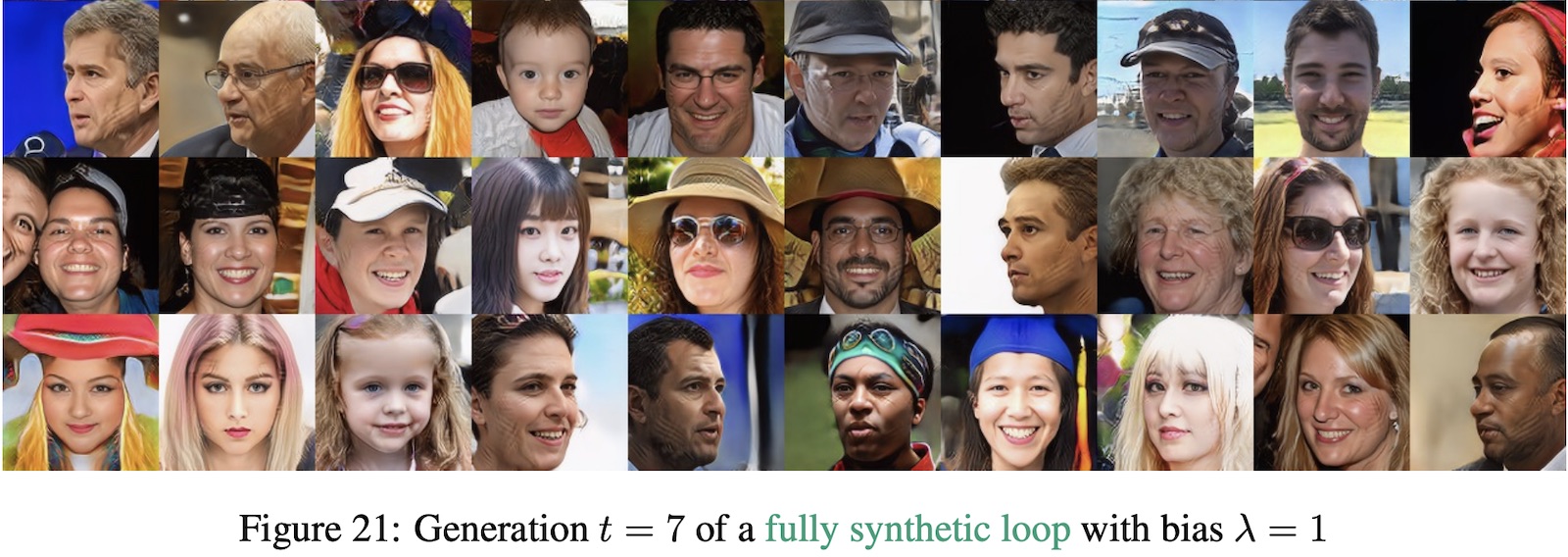

And here is an example without the truncation trick:

Of course, the assumption that “each round of iteration only uses data generated by the previous round’s model” is quite extreme. The original paper also analyzed cases where each round includes a certain amount of real data. This situation includes two sub-cases: 1. The sampling results of real data are constant from the beginning; 2. Fresh real data can be sampled for each iteration. The first method is easier to implement, but the original paper shows it can only slow down the rate of degradation and cannot fundamentally solve the problem. Although the second method can solve the degradation problem, in a practical context, it is very difficult for us to effectively filter between real data and model-generated data.

Summary

This article explored the potential consequences when various generative models “run amok” on the internet on a large scale. When generative models repeatedly update and iterate using data they generated themselves, it may lead to serious information homogenization and loss of diversity, similar to the “Mad Cow Disease” that once appeared due to “cows eating cows.”