For readers concerned with how to extend the context length of Large Language Models (LLMs), last week was undoubtedly an exciting one, with the open-source community continuously producing exhilarating results. First, the user @kaiokendev experimented with a "positional linear interpolation" scheme in his project SuperHOT, showing that with very little fine-tuning on long texts, existing LLMs can handle long contexts. Almost simultaneously, Meta proposed the same idea and published a paper titled "Extending Context Window of Large Language Models via Positional Interpolation" with extensive experimental results. The surprises did not stop there; subsequently, user @bloc97 proposed NTK-aware Scaled RoPE, achieving the effect of extending context length without any fine-tuning!

All these developments, especially NTK-aware Scaled RoPE, forced me to rethink the meaning of RoPE. After analysis, I discovered that the construction of RoPE can be viewed as a \beta-ary encoding. From this perspective, these advancements in the open-source community can be understood as different ways of expanding the base encoding.

Base Representation

Suppose we have an integer n less than 1000 (excluding 1000) that needs to be input into a model as a condition. What is the best way to do this?

The most naive idea is to input it directly as a one-dimensional floating-point vector. However, the range from 0 to 999 spans nearly a thousand units, which is not easy for gradient-based optimizers to handle. What about scaling it to between 0 and 1? That is also not ideal, because the difference between adjacent numbers would change from 1 to 0.001, making it difficult for the model and the optimizer to distinguish between them. Generally speaking, gradient-based optimizers are somewhat "finicky"; they can only handle inputs that are neither too large nor too small.

To avoid this problem, we need to devise a new input method. When we don’t know how to let a machine handle it, we might as well think about how humans do it. For an integer like 759, this is a three-digit number in base-10, where each digit is between 0 and 9. Since we use base-10 to represent numbers ourselves, why not input the base-10 representation directly into the model? That is, we input the integer n as a three-dimensional vector [a, b, c], where a, b, c are the hundreds, tens, and units digits of n, respectively. In this way, we reduce the span of the numbers without shrinking the gap between adjacent numbers, at the cost of increasing the input dimension—conveniently, neural networks are good at processing high-dimensional data.

If we want to further reduce the span of the numbers, we can further reduce the base of the number system, such as using base-8, base-6, or even binary (base-2), at the cost of further increasing the input dimension.
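As a minimal sketch of the digit-vector input described above (the helper name `to_digits` is my own for illustration, not something from the projects mentioned), the same integer can be written with a fixed number of digits in any base:

```python
def to_digits(n: int, base: int, width: int) -> list[int]:
    """Return the `width` lowest digits of n in `base`, most significant first."""
    if n < 0 or base < 2 or width < 1:
        raise ValueError("need n >= 0, base >= 2, width >= 1")
    digits = []
    for _ in range(width):
        n, r = divmod(n, base)  # peel off the lowest digit
        digits.append(r)
    return digits[::-1]


print(to_digits(759, 10, 3))  # [7, 5, 9]
print(to_digits(759, 8, 4))   # [1, 3, 6, 7]
print(to_digits(759, 2, 10))  # [1, 0, 1, 1, 1, 1, 0, 1, 1, 1]
```

A smaller base narrows the range of each entry (0 to 9, 0 to 7, 0 to 1) while the vector grows from 3 to 4 to 10 dimensions, which is exactly the trade-off mentioned above.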

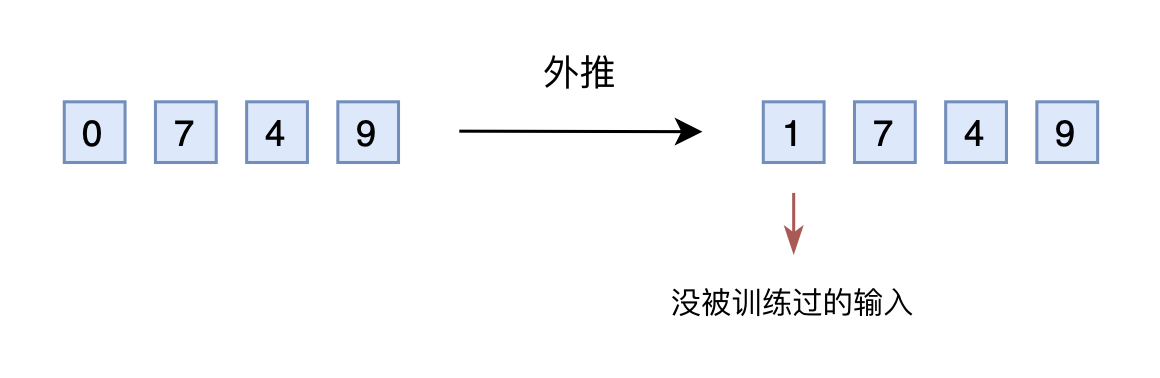

Direct Extrapolation

Suppose we trained a model using a three-dimensional base-10 representation, and the model performs well. Then a new requirement arises: increase the upper limit of n to 2000. How should we handle this?

If we still use the base-10 representation as a vector input, the input would now be a four-dimensional vector. However, the original model was designed and trained for three-dimensional vectors, so it cannot handle the additional dimension. Some readers might ask, why not reserve enough dimensions in advance? Yes, we can reserve a few extra dimensions, set them to 0 during the training phase, and change them to other numbers during the inference phase. This is Extrapolation.

However, the dimensions reserved during training are always 0. If they are changed to other numbers during inference, the performance may not be good because the model may not have the ability to adapt to situations it hasn’t been trained on. In other words, because the training data for certain dimensions is insufficient, direct extrapolation usually leads to a serious degradation in model performance.

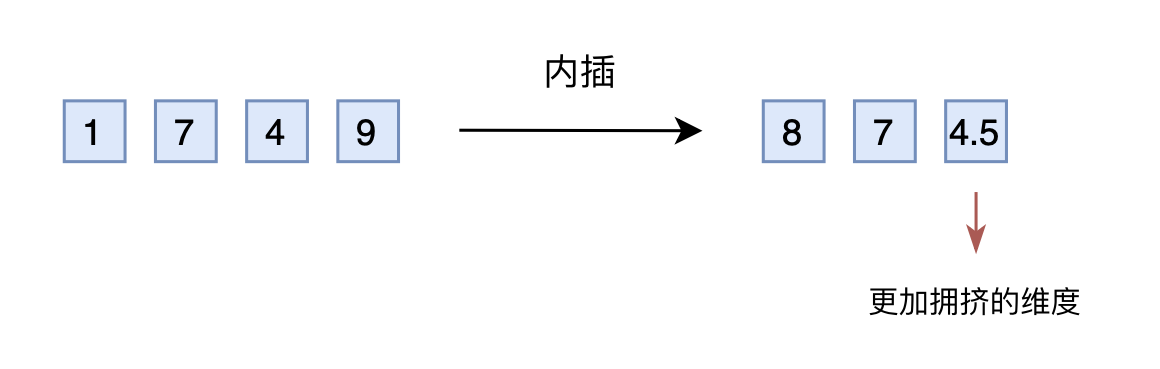

Linear Interpolation

Thus, some people thought of changing extrapolation to Interpolation. Simply put, this involves compressing the range of 2000 into 1000. For example, by dividing by 2, 1749 becomes 874.5, which is then converted into a three-dimensional vector [8, 7, 4.5] and input into the original model. In terms of absolute values, the mapping is no longer consistent with the original one: the vector [7, 4, 9], which used to mean 749, now corresponds to 1498, twice the original value. In terms of relative values, the original gap between adjacent numbers was 1, but now it is 0.5, making the last dimension more "crowded." Therefore, after making interpolation modifications, fine-tuning is usually required so that the model can re-adapt to the crowded mapping relationship.
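To make the "crowding" concrete, here is a small sketch of the interpolated input. The function name and the choice to put the fractional part entirely on the last digit are my own assumptions, following the [8, 7, 4.5] example above:

```python
def to_digits_interpolated(n: int, scale: float, base: int = 10, width: int = 3) -> list[float]:
    """Compress n by `scale`, then write it as `width` base-`base` digits.

    The fractional part left over after compression is added to the last
    (lowest) digit, so only that dimension becomes more crowded.
    """
    x = n / scale
    whole = int(x)        # integer part of the compressed value
    frac = x - whole      # leftover fraction
    digits = []
    for _ in range(width):
        whole, r = divmod(whole, base)
        digits.append(float(r))
    digits.reverse()
    digits[-1] += frac
    return digits


print(to_digits_interpolated(1748, 2))  # [8.0, 7.0, 4.0]
print(to_digits_interpolated(1749, 2))  # [8.0, 7.0, 4.5]
```

Adjacent integers now differ by 0.5 in the last dimension, while the first two dimensions still move in steps of 1.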

Of course, some readers might say that the extrapolation scheme can also be fine-tuned. Yes, but the interpolation scheme requires far fewer fine-tuning steps because, in many scenarios (such as positional encoding), relative magnitude (or ordinal information) is more important. In other words, the model only needs to know that 874.5 is larger than 874; it doesn’t need to know exactly what large number it represents. Since the original model has already learned that 875 is larger than 874, and the model itself has a certain generalization ability, learning that 874.5 is larger than 874 is not too difficult.

However, the interpolation scheme is not perfect. When the processing range increases further, the adjacent difference becomes even smaller, and this shrinkage is concentrated in the units digit, while the hundreds and tens digits still maintain an adjacent difference of 1. In other words, interpolation treats the dimensions unequally: their value distributions no longer match one another, which makes further learning harder for the model.

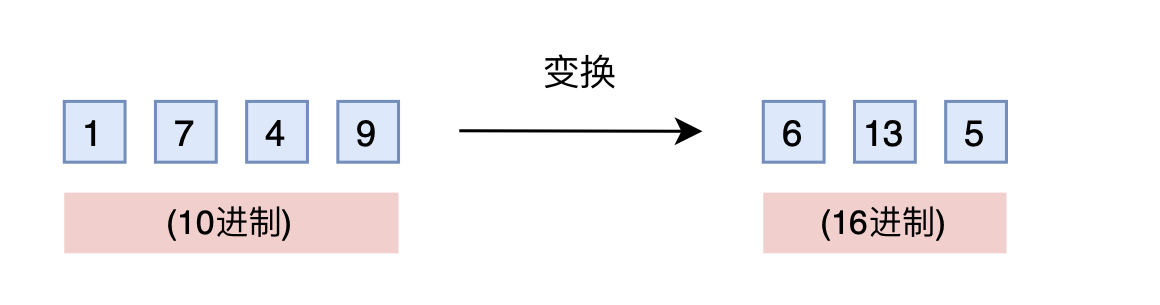

Base Conversion

Is there a scheme that does not require adding dimensions and maintains the adjacent gap? Yes, and it’s something we are very familiar with: base conversion! A three-digit base-10 encoding can represent 0–999. What if we use base-16? It can represent up to 16^3 - 1 = 4095 > 1999. Therefore, by simply converting to base-16, such as 1749 becoming [6, 13, 5], a three-dimensional vector can cover the target range, at the cost of the digits in each dimension changing from 0–9 to 0–15.

If you think about it carefully, you will realize this is a brilliant idea. As mentioned earlier, the scenarios we care about mainly utilize ordinal information. The original trained model has already learned 875 > 874, and in base-16, 875 > 874 still holds; the comparison rules are exactly the same (the model has no idea what base you are using). The only concern is whether the model can still compare normally when the digits in each dimension exceed 9 (i.e., 10–15), but in fact, general models have a certain generalization ability, so extrapolating each dimension slightly outward is fine. Therefore, this base conversion idea might even be effective without fine-tuning the original model! Furthermore, to further narrow the extrapolation range, we can use a smaller base like \lceil\sqrt[3]{2000}\rceil = 13 instead of base-16.
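The arithmetic in this section can be checked with a short sketch. The helpers `to_digits` and `min_base` are illustrative names of my own, not part of any of the projects discussed:

```python
def to_digits(n: int, base: int, width: int) -> list[int]:
    """Return the `width` lowest digits of n in `base`, most significant first."""
    digits = []
    for _ in range(width):
        n, r = divmod(n, base)
        digits.append(r)
    return digits[::-1]


def min_base(limit: int, width: int) -> int:
    """Smallest integer base b such that `width` digits cover 0 .. limit-1."""
    b = 2
    while b ** width < limit:
        b += 1
    return b


print(to_digits(1749, 16, 3))  # [6, 13, 5]
print(min_base(2000, 3))       # 13
print(to_digits(1749, 13, 3))  # [10, 4, 7]

# Order is compared digit by digit in any base, so the rule the model
# learned in base 10 carries over unchanged.
assert to_digits(875, 10, 3) > to_digits(874, 10, 3)
assert to_digits(875, 16, 3) > to_digits(874, 16, 3)
```

Using an integer search in `min_base` rather than a floating-point cube root avoids rounding issues near exact powers.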

Next, we will see that this idea of base conversion actually corresponds to the NTK-aware scaled RoPE mentioned at the beginning of the article!

Positional Encoding

To establish the connection between them, we first need to establish the following result:

The Rotary Positional Encoding (RoPE) of position n is essentially a \beta-ary encoding of the number n!

This might seem surprising because the two appear quite different. However, the operations of both share the same key properties. To understand this, let’s first recall how to find the m-th digit (counting from right to left) of a number n in its \beta-ary representation:

\begin{equation} \left\lfloor\frac{n}{\beta^{m-1}}\right\rfloor \bmod \beta \label{eq:mod} \end{equation}

That is, first divide by the (m-1)-th power of \beta, and then find the modulus (remainder). Now let’s recall RoPE. Its construction is based on Sinusoidal positional encoding, which can be rewritten as:

\begin{equation} \left[\cos\left(\frac{n}{\beta^0}\right), \sin\left(\frac{n}{\beta^0}\right), \cos\left(\frac{n}{\beta^1}\right), \sin\left(\frac{n}{\beta^1}\right), \dots, \cos\left(\frac{n}{\beta^{d/2-1}}\right), \sin\left(\frac{n}{\beta^{d/2-1}}\right)\right] \label{eq:sinu} \end{equation}

where \beta = 10000^{2/d}. Now, comparing Equation [eq:mod] and Equation [eq:sinu], doesn’t Equation [eq:sinu] also have the exact same \frac{n}{\beta^{m-1}}? As for the modulo operation, its most important characteristic is periodicity. Aren’t \cos and \sin in Equation [eq:sinu] also periodic functions? Therefore, aside from the insignificant difference of the floor function, RoPE (or Sinusoidal positional encoding) is actually a \beta-ary encoding of the number n!
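The parallel between the two formulas can be seen by writing both side by side. This is only a sketch with function names of my own choosing: `digit` implements Equation [eq:mod] and `sinusoidal` implements Equation [eq:sinu] with \beta = 10000^{2/d}:

```python
import math


def digit(n: int, beta: int, m: int) -> int:
    """m-th digit of n in base beta, counting from the right (m = 1, 2, ...)."""
    return (n // beta ** (m - 1)) % beta


def sinusoidal(n: int, d: int, base: float = 10000.0) -> list[float]:
    """d-dimensional Sinusoidal encoding of position n, with beta = base**(2/d)."""
    beta = base ** (2.0 / d)
    out = []
    for m in range(d // 2):
        angle = n / beta ** m          # same n / beta^(m-1) pattern, with m starting at 0
        out.extend([math.cos(angle), math.sin(angle)])  # periodic, like "mod beta"
    return out


print([digit(759, 10, m) for m in (3, 2, 1)])  # [7, 5, 9]
print(len(sinusoidal(759, 8)))                 # 8
```

Both functions divide n by a growing power of \beta and then apply a periodic operation; the digit version wraps around every \beta steps, while each cos/sin pair wraps around every 2\pi.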

Having established this connection, the expansion schemes for the integer n discussed in the previous sections can be mapped to the various advancements mentioned at the beginning of the article. Among them, the direct extrapolation scheme changes nothing. The interpolation scheme replaces n with n/k, where k is the expansion factor; this is the Positional Interpolation experimented with in Meta’s paper, where experimental results also proved that extrapolation indeed requires more fine-tuning steps than interpolation.

As for base conversion, to expand the representation range by k times, the original \beta-ary base must be expanded to at least a \beta(k^{2/d})-ary base (although Equation [eq:sinu] is a d-dimensional vector, \cos and \sin appear in pairs, so it is equivalent to a d/2-digit \beta-ary representation; thus we take the (d/2)-th root rather than the d-th root). Equivalently, the original base 10000 is replaced by 10000k. This is essentially NTK-aware Scaled RoPE. As discussed before, since positional encoding relies more on ordinal information and base conversion basically does not change the rules for comparing order, NTK-aware Scaled RoPE can achieve good results on longer contexts even without fine-tuning.
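The three schemes differ only in the angles fed to \cos and \sin. Below is a minimal sketch under the assumptions of this section (the function names are mine, and real implementations operate on tensors rather than Python lists):

```python
import math


def rope_angles(n: float, d: int, base: float = 10000.0) -> list[float]:
    """Angles n / beta**i for i = 0 .. d/2 - 1, where beta = base**(2/d)."""
    return [n / base ** (2 * i / d) for i in range(d // 2)]


def pi_angles(n: float, d: int, k: float) -> list[float]:
    """Positional Interpolation: replace n with n / k."""
    return rope_angles(n / k, d)


def ntk_angles(n: float, d: int, k: float) -> list[float]:
    """Base conversion (NTK-aware Scaled RoPE): replace base 10000 with 10000 * k."""
    return rope_angles(n, d, base=10000.0 * k)


d, k, n = 128, 8, 100
plain, pi, ntk = rope_angles(n, d), pi_angles(n, d, k), ntk_angles(n, d, k)
print(plain[0], pi[0], ntk[0])     # highest frequency: PI divides it by k, NTK keeps it
print(plain[-1], pi[-1], ntk[-1])  # lowest frequency: NTK lands close to PI
```

Replacing 10000 with 10000k is the same as multiplying \beta by k^{2/d}, since (10000k)^{2/d} = 10000^{2/d} \cdot k^{2/d}.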

Tracing the Origin

Some readers might wonder, what does this have to do with NTK? NTK stands for "Neural Tangent Kernel," which we briefly touched upon in "Optimization Algorithms from a Dynamical Perspective (7): SGD \approx SVM?". The connection between the above results and NTK has more to do with the proposer’s academic background. The proposer is familiar with results such as "Fourier Features Let Networks Learn High Frequency Functions in Low Dimensional Domains", which uses NTK-related results to prove that neural networks cannot directly learn high-frequency signals. The solution is to transform them into Fourier features, whose form is similar to the Sinusoidal positional encoding in Equation [eq:sinu].

Therefore, based on intuition from NTK-related results, the proposer derived NTK-aware Scaled RoPE. I consulted the proposer about his derivation, and it is actually quite simple: it combines extrapolation and interpolation, with extrapolation at high frequencies and interpolation at low frequencies. Specifically, the lowest frequency in Equation [eq:sinu] is the \frac{n}{\beta^{d/2-1}} term. Introducing a parameter \lambda makes it \frac{n}{(\beta\lambda)^{d/2-1}}. To make it consistent with interpolation:

\begin{equation} \frac{n}{(\beta\lambda)^{d/2-1}} = \frac{n/k}{\beta^{d/2-1}} \end{equation}

Solving this gives \lambda = k^{2/(d-2)}. At the high-frequency end, the first term \frac{n}{\beta^0} does not involve \lambda at all, and the next term \frac{n}{\beta} becomes \frac{n}{\beta\lambda} after introducing \lambda. Since d is usually very large, \lambda is very close to 1, so it remains close to \frac{n}{\beta}, which is equivalent to extrapolation.
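For readers who want the intermediate steps, the value of \lambda follows directly from the matching condition for the lowest-frequency term (this uses only the symbols already defined above):

```latex
\begin{aligned}
\frac{n}{(\beta\lambda)^{d/2-1}} = \frac{n/k}{\beta^{d/2-1}}
&\;\Longrightarrow\; (\beta\lambda)^{d/2-1} = k\,\beta^{d/2-1} \\
&\;\Longrightarrow\; \lambda^{d/2-1} = k \\
&\;\Longrightarrow\; \lambda = k^{1/(d/2-1)} = k^{2/(d-2)}
\end{aligned}
```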

Thus, this scheme cleverly combines extrapolation and interpolation. Furthermore, since d is relatively large (64 for BERT, 128 for LLaMA), k^{2/(d-2)} is not much different from k^{2/d}, so it is basically consistent with the k^{2/d} solution I proposed based on the base conversion idea. Also, from the proposer’s perspective, any scheme that achieves "high-frequency extrapolation, low-frequency interpolation" is acceptable, not just the scheme of introducing \lambda mentioned above. Readers can try this for themselves.
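A quick numerical check of how close the two exponents are, as a sketch with helper names of my own and k = 8 taken from the experiment below:

```python
import math


def lam_ntk(k: float, d: int) -> float:
    """Scaling from matching the lowest frequency to interpolation: k**(2/(d-2))."""
    return k ** (2 / (d - 2))


def lam_base(k: float, d: int) -> float:
    """Scaling from the base-conversion argument: k**(2/d)."""
    return k ** (2 / d)


k = 8
for d in (64, 128):
    print(d, lam_ntk(k, d), lam_base(k, d))

# With lam_ntk, the lowest-frequency denominator is exactly k times the original.
d = 128
beta = 10000.0 ** (2 / d)
assert math.isclose((beta * lam_ntk(k, d)) ** (d / 2 - 1), k * beta ** (d / 2 - 1))
```

For both values of d the two factors are only slightly above 1 and differ from each other by well under 0.01, which is why the two schemes behave almost identically.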

Personal Testing

NTK-aware Scaled RoPE claims to increase the context length of LLMs without fine-tuning, so I was shocked when I first saw it and couldn’t wait to test it. After all, according to the experience in "Transformer Upgrade Road: 9. A New Idea for Global Length Extrapolation", many mainstream schemes failed on my preferred "GAU + Post Norm" combination. So how does this method perform?

When k=8, the comparison results are as follows (for the difference between "Repeat" and "Non-repeat," please refer to here):

| Test Length | 512 (Train) | 4096 (Repeat) | 4096 (Non-repeat) |
|---|---|---|---|
| Baseline | 49.41% | 24.17% | 23.16% |
| Baseline-\log n | 49.40% | 24.60% | 24.02% |
| PI-RoPE | 49.41% | 15.04% | 13.54% |
| PI-RoPE-\log n | 49.40% | 14.99% | 16.51% |
| NTK-RoPE | 49.41% | 51.28% | 39.27% |
| NTK-RoPE-\log n | 49.40% | 61.71% | 43.75% |

The results reported above are all without long-text fine-tuning. "Baseline" is extrapolation, "PI" (Positional Interpolation) is the baseline modified with interpolation, and "NTK-RoPE" is the baseline modified with NTK-aware Scaled RoPE. The options with "\log n" refer to adding the scale from "Attention Scale Operation from the Perspective of Entropy Invariance" during pre-training. I considered this variant because I felt that while NTK-RoPE solves the length generalization problem of RoPE, it does not solve the problem of attention dispersion.

The experimental results in the table fully meet expectations:

1. Direct extrapolation performance is poor;

2. Interpolation without fine-tuning also performs poorly;

3. NTK-RoPE achieves non-trivial (though slightly degraded) extrapolation results without fine-tuning;

4. Adding \log n to concentrate attention indeed helps.

Thus, NTK-RoPE has successfully become the second scheme I have tested that can extend the context length of LLMs without fine-tuning (the first being NBCE). Once again, I applaud the proposer’s excellent insight! Even more gratifying is that NTK-RoPE performs significantly better on "Repeat" extrapolation than on "Non-repeat" extrapolation, indicating that this modification preserves global dependencies rather than simply localizing attention.

Final Thoughts

This article interprets RoPE from the perspective of \beta-ary encoding and uses this view to introduce some current developments in the open-source community regarding Long Context, including a modification scheme that increases context length without fine-tuning.

In just one week, the progress of Long Context in the open-source community has been overwhelming and gratifying, leading user @ironborn123 to comment:

Last week looks like the revenge of the interpolators :) ClosedAI better watch out.