Never would I have thought that the Bias term could be linked to the length extrapolation of Transformers!

Length extrapolation is an ideal property we hope Transformers possess. I have systematically introduced this issue in "The Road to Transformer Upgrade: 7. Length Extrapolation and Local Attention" and "The Road to Transformer Upgrade: 8. Length Extrapolation and Positional Robustness". As for the Bias term (offset term), the current mainstream view is that when the model is large enough, the Bias term does not play any special role. Therefore, many models choose to remove the Bias term, with Google’s T5 and PaLM being representative examples. Our subsequent RoFormerV2 and GAU-\alpha also followed this practice.

So, how are these two seemingly "completely unrelated" things connected? Can the Bias term really enhance the length extrapolation of Transformers? Let me explain.

Replacing with Bias

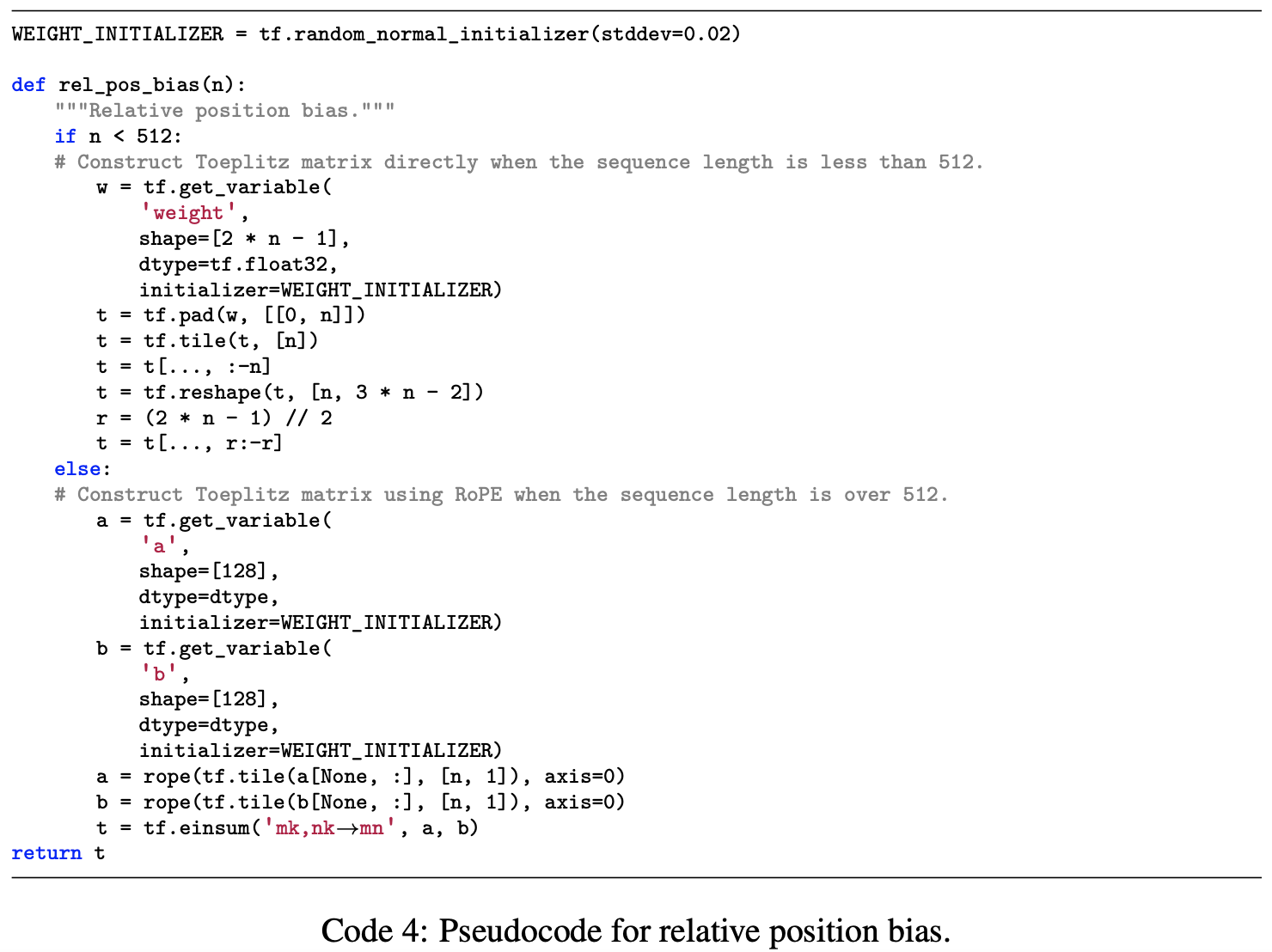

However, for me, the solution of adding an extra term to the Attention matrix to enhance length extrapolation seems less than elegant. Regardless of the original authors’ intention or the actual effect, I am not inclined to do this. Is there a similar but almost "seamless" solution? I considered that if \boldsymbol{a} and \boldsymbol{b} were the Bias terms of \boldsymbol{q}_m and \boldsymbol{k}_n respectively, it might achieve a similar effect. That is, consider:

\begin{equation} \boldsymbol{q}_m^{\top}\boldsymbol{\mathcal{R}}_m^{\top}\boldsymbol{\mathcal{R}}_n\boldsymbol{k}_n \quad\to\quad (\boldsymbol{q}_m + \boldsymbol{a})^{\top}\boldsymbol{\mathcal{R}}_m^{\top}\boldsymbol{\mathcal{R}}_n(\boldsymbol{k}_n + \boldsymbol{b}) \end{equation}

Obviously, simply adding a Bias term is almost "seamless" in terms of both form and computation. If this could enhance length extrapolation, it would undoubtedly be a very beautiful solution. Is it feasible? Let’s look at the expanded result:

\begin{equation} \boldsymbol{q}_m^{\top}\boldsymbol{\mathcal{R}}_m^{\top}\boldsymbol{\mathcal{R}}_n\boldsymbol{k}_n + \boldsymbol{a}^{\top}\boldsymbol{\mathcal{R}}_m^{\top}\boldsymbol{\mathcal{R}}_n\boldsymbol{k}_n + \boldsymbol{q}_m^{\top}\boldsymbol{\mathcal{R}}_m^{\top}\boldsymbol{\mathcal{R}}_n\boldsymbol{b} + \boldsymbol{a}^{\top}\boldsymbol{\mathcal{R}}_m^{\top}\boldsymbol{\mathcal{R}}_n\boldsymbol{b} \label{eq:bias} \end{equation}

The first and fourth terms correspond exactly to Equation [eq:rel-bias]; they are what we want. So we want to see what role the second and third terms play. If they do not have any obvious negative effects, then the approach of directly adding Bias terms at least "has hope" of achieving extrapolation effects similar to Equation [eq:rel-bias] or Sandwich.
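The four-term expansion can be checked numerically. What follows is only a minimal NumPy sketch, not the code used in the experiments. The helper names `rope_matrix` and `score_terms` are hypothetical, the frequencies assume the common RoPE choice of 10000^(-2i/d), and q, k, a, b are random stand-ins rather than trained parameters.

```python
import numpy as np

def rope_matrix(pos, dim, base=10000.0):
    """Block-diagonal RoPE rotation matrix R_pos of shape (dim, dim).

    Assumes the usual frequencies theta_i = base^(-2i/dim) and pairs
    coordinates (2i, 2i+1); both are illustrative assumptions.
    """
    half = dim // 2
    theta = base ** (-np.arange(half) / half)
    R = np.zeros((dim, dim))
    for i, t in enumerate(theta):
        c, s = np.cos(pos * t), np.sin(pos * t)
        R[2 * i:2 * i + 2, 2 * i:2 * i + 2] = [[c, -s], [s, c]]
    return R

def score_terms(q, k, a, b, m, n):
    """Four terms of (q + a)^T R_m^T R_n (k + b), in the order of the expansion."""
    M = rope_matrix(m, q.size).T @ rope_matrix(n, q.size)
    return np.array([q @ M @ k, a @ M @ k, q @ M @ b, a @ M @ b])

rng = np.random.default_rng(0)
d = 64
q, k, a, b = rng.standard_normal((4, d))  # random stand-ins, not trained values
m, n = 7, 19

terms = score_terms(q, k, a, b, m, n)
M = rope_matrix(m, d).T @ rope_matrix(n, d)
full = (q + a) @ M @ (k + b)

# The four terms add up to the biased score.
print(np.isclose(terms.sum(), full))
# R_m^T R_n equals R_{n-m}, so only the relative position matters.
print(np.allclose(M, rope_matrix(n - m, d)))
# Shifting m and n by the same offset leaves every term unchanged.
shifted = score_terms(q, k, a, b, m + 5, n + 5)
print(np.allclose(shifted, terms))
```

In this sketch the fourth term, `terms[3]`, contains neither q nor k. It is a pure function of n-m, which is why it can act like a relative position bias.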

My reasoning is as follows: as the Query and Key of Attention, \boldsymbol{q}_m and \boldsymbol{k}_n should be relatively "isotropic," meaning their directions are fairly uniform, close to uniform sampling on a sphere. Since \boldsymbol{\mathcal{R}}_m^{\top}\boldsymbol{\mathcal{R}}_n=\boldsymbol{\mathcal{R}}_{n-m} is just an orthogonal transformation, it does not change the isotropic nature of \boldsymbol{q}_m and \boldsymbol{k}_n. Thus, the two terms \boldsymbol{a}^{\top}\boldsymbol{\mathcal{R}}_m^{\top}\boldsymbol{\mathcal{R}}_n\boldsymbol{k}_n and \boldsymbol{q}_m^{\top}\boldsymbol{\mathcal{R}}_m^{\top}\boldsymbol{\mathcal{R}}_n\boldsymbol{b} are each equivalent to the inner product of a vector sampled from an isotropic distribution and a fixed vector. According to our discussion in "Distribution of the Angle Between Two Random Vectors in n-Dimensional Space", in a high-dimensional space the angle between two such vectors is very close to 90 degrees with high probability. In other words, the expectation of this inner product is 0, and its typical magnitude is small relative to the product of the two norms. Therefore, the effects of the second and third terms should theoretically not be as strong as those of the remaining two terms.
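The "close to 90 degrees" claim is easy to probe with a small simulation. The sketch below is illustrative only. It assumes perfectly isotropic samples (normalized Gaussians), which real Queries and Keys only approximate, and `cosines_with_fixed` is a hypothetical helper name.

```python
import numpy as np

def cosines_with_fixed(dim, num_samples=10000, seed=0):
    """Cosine between a fixed unit vector and vectors uniform on the unit sphere."""
    rng = np.random.default_rng(seed)
    x = rng.standard_normal((num_samples, dim))
    x /= np.linalg.norm(x, axis=1, keepdims=True)  # normalized Gaussians are uniform on the sphere
    a = rng.standard_normal(dim)
    a /= np.linalg.norm(a)  # the fixed direction, e.g. a bias vector
    return x @ a

for dim in (8, 64, 512):
    cos = cosines_with_fixed(dim)
    angles = np.degrees(np.arccos(np.clip(cos, -1.0, 1.0)))
    # The cosine has mean 0 and standard deviation 1/sqrt(dim), so the
    # angles concentrate around 90 degrees as dim grows.
    print(dim, cos.mean(), cos.std(), 1 / np.sqrt(dim), angles.mean(), angles.std())
```

The cosine has zero mean and a standard deviation of 1/sqrt(d). So the cross terms vanish in expectation, but each individual value is only small rather than exactly zero, which is why the argument is a heuristic and still needs experiments.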

Of course, this is just a guess. How it actually trains can only be determined through experiments. So, without further ado, I conducted the experiments.

Experimental Results

For this experiment, I chose a language modeling task. The model architecture is still the previous GAU-\alpha. The training length and batch size are both 512. The optimizer is Tiger. The only difference between the two models is whether the Bias for Q and K is enabled (other Biases are still removed).

Comparison of extrapolation effects:

| Test length | 512 | 1024 | 2048 | 4096 |
|---|---|---|---|---|
| w/o Bias | 52.37% | 33.15% | 22.85% | 17.87% |
| w/ Bias | 52.75% | 50.99% | 45.25% | 39.55% |

As can be seen, the Bias term barely changes the result at the training length (52.75% versus 52.37% at length 512), but it significantly widens the gap in length extrapolation (39.55% versus 17.87% at length 4096). The seemingly insignificant Bias term actually has such a magical effect! Of course, if the experiment were rerun several times, the extrapolation results might fluctuate significantly, as length extrapolation is a "bonus feature" here and not something we explicitly trained for.

To verify whether the underlying mechanism is as we guessed, I visualized the variation of the four terms in Equation [eq:bias] for a certain layer of a sample:

It can be seen that the 4th term indeed shows a decaying trend and its magnitude is dominant. Superimposing these four terms and comparing them with the model without Bias:

In the model without Bias (blue), the Attention indeed shows a decaying trend within the training length (512), but it rises after the length increases, lacking obvious locality. This is why its extrapolation is not good enough. Conversely, consistent with the previous guess, the Attention matrix of the model with the Bias term (orange) shows a more pronounced decaying trend. In other words, its localization effect is stronger, leading to better extrapolation performance. It should be noted that the model with Bias does not show such a clear decaying trend in the Attention of every layer. Generally speaking, the decay trend is more obvious in the earlier layers and weaker in the later layers, indicating that layers closer to the input focus more on local information, which is consistent with the conclusion of "The Devil in Linear Transformer".

[Note: Later, after repeated testing, it was found that the length extrapolation results in this article are somewhat unstable in terms of reproducibility (possibly closely related to model structure, hyperparameters, etc.). Please use with discretion.]

Extended Thinking

At this point, a question arises: haven’t previous works on length extrapolation already verified that RoPE’s extrapolation is not very good? Did they all omit the Bias? To this end, I specifically investigated and found that, as expected, the "pioneering work" ALiBi and the recent xPos both do not include Bias terms, while KERPLE and Sandwich do. Previously, when reading the papers, I always felt that the RoPE extrapolation effect in KERPLE and Sandwich seemed better than in ALiBi and xPos. Now I am fairly confident that this was not an illusion. Since KERPLE and Sandwich both added Bias, according to the conclusion of this article, RoPE can indeed exhibit better length extrapolation.

Some readers might recall: wasn’t it said before that the Bias for the Key in Attention can be removed? Can it also be removed here? Regarding this question, one can refer to the Zhihu question "Why do some Vision Transformers not need bias in the key?". In fact, the conclusion that "the Key’s Bias can be removed" is specific to Attention without RoPE. Due to the existence of Softmax, the added bias can be canceled out:

\begin{equation} \frac{e^{\boldsymbol{q}\cdot(\boldsymbol{k}_n + \boldsymbol{b})}}{\sum\limits_n e^{\boldsymbol{q}\cdot(\boldsymbol{k}_n + \boldsymbol{b})}} = \frac{e^{\boldsymbol{q}\cdot\boldsymbol{k}_n}e^{\boldsymbol{q}\cdot\boldsymbol{b}}}{\sum\limits_n e^{\boldsymbol{q}\cdot\boldsymbol{k}_n} e^{\boldsymbol{q}\cdot\boldsymbol{b}}}= \frac{e^{\boldsymbol{q}\cdot\boldsymbol{k}_n}}{\sum\limits_n e^{\boldsymbol{q}\cdot\boldsymbol{k}_n}} \end{equation}

However, this "cancellation" depends on \boldsymbol{b} being independent of n. But from Equation [eq:bias], we know that after RoPE, \boldsymbol{b} effectively becomes \boldsymbol{\mathcal{R}}_n\boldsymbol{b}, so the terms it contributes are functions of m and n and cannot be canceled out. Therefore, for models with RoPE, removing the Bias term will lead to different results.
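Both halves of this argument can be checked on random data. The following NumPy sketch is an illustration under assumptions, not the experimental code. `rope` is a hypothetical helper using the common 10000^(-2i/d) frequencies, and q, K, b are random stand-ins.

```python
import numpy as np

def softmax(x):
    e = np.exp(x - x.max())  # subtract the max for numerical stability
    return e / e.sum()

def rope(x, pos, base=10000.0):
    """Rotate x (shape (..., d)) by position(s) pos, pairing coordinates (2i, 2i+1)."""
    half = x.shape[-1] // 2
    theta = base ** (-np.arange(half) / half)
    ang = np.asarray(pos, dtype=float)[..., None] * theta
    c, s = np.cos(ang), np.sin(ang)
    x1, x2 = x[..., 0::2], x[..., 1::2]
    out = np.empty_like(x, dtype=float)
    out[..., 0::2] = x1 * c - x2 * s
    out[..., 1::2] = x1 * s + x2 * c
    return out

rng = np.random.default_rng(0)
L, d = 16, 32
q = rng.standard_normal(d)       # one query, at position m
K = rng.standard_normal((L, d))  # keys at positions 0..L-1
b = rng.standard_normal(d)       # Key bias (random stand-in)
m, pos = L - 1, np.arange(L)

# Without RoPE: q.(k_n + b) = q.k_n + q.b, a shift that is constant in n.
p_plain = softmax(K @ q)
p_plain_bias = softmax((K + b) @ q)

# With RoPE: the bias is rotated by R_n, so its contribution varies with n.
q_rot = rope(q, m)
p_rope = softmax(rope(K, pos) @ q_rot)
p_rope_bias = softmax(rope(K + b, pos) @ q_rot)

print(np.allclose(p_plain, p_plain_bias))  # bias cancels inside Softmax
print(np.allclose(p_rope, p_rope_bias))    # bias no longer cancels
```

In the plain case the two distributions should agree to floating-point precision, while in the RoPE case they should differ, matching the reasoning above.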

Another question is: why bother exploring length extrapolation? Isn’t it possible to just fine-tune the model on longer samples? In fact, even for readers who hold this view, length extrapolation is beneficial. Setting aside computational power, better length extrapolation means that during fine-tuning, the gap with pre-training is smaller, making fine-tuning less prone to catastrophic forgetting. This is even more important for current LLMs. Of course, one can also think further: the ideal result is that a model trained on short texts can switch to long-text scenarios without loss of performance, or even with better performance.

Summary

This article shares an "unexpected" and interesting conclusion discovered by the author: the Bias term can enhance the length extrapolation of RoPE models! The seemingly insignificant Bias term can actually be linked to the length extrapolation of Transformers, making one marvel at the importance of details—sometimes minor details can play a key role.

For reprints, please include the original address: https://kexue.fm/archives/9577
